Google DeepMind has launched Gemma 4, a family of open models designed to run across a range of platforms.

Ahead of the official announcement, developers and researchers were eager to see how far the new suite of models would extend what open models can do.

At launch, it was revealed that Gemma 4 supports more than 140 languages. This multilingual coverage is important for global applications, because developers can serve users in many languages with one model family.

Gemma 4 is released under the Apache 2.0 license, a permissive license that allows commercial use, modification, and redistribution. This lets developers adapt the models and build their own work on top of them.

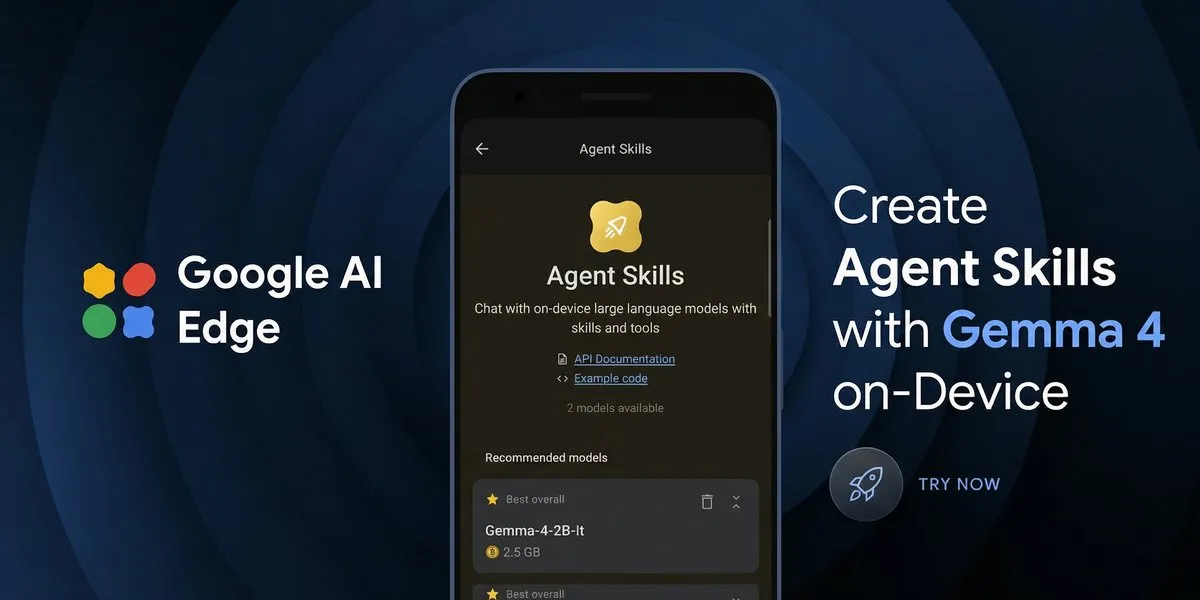

A standout feature of Gemma 4 is its support for multi-step planning and autonomous actions. Developers can use it to build agentic applications that call external tools and APIs and then act on the results.
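To make the idea of tool interaction concrete, here is a minimal sketch of an agent loop. It is not Gemma 4's actual tool-calling interface: the JSON request shape (`{"tool": ..., "args": ...}`), the `TOOLS` registry, the two example tools, and the `scripted_model` stand-in are all assumptions made for illustration. In a real application, `scripted_model` would be replaced by a call to whichever runtime serves the model.

```python
import json
from typing import Callable

# Hypothetical tools; names and signatures are illustrative only.
def get_weather(city: str) -> str:
    return f"Sunny in {city}"

def add(a: float, b: float) -> float:
    return a + b

TOOLS: dict[str, Callable] = {"get_weather": get_weather, "add": add}

def run_agent(model: Callable[[list[dict]], str], prompt: str, max_steps: int = 5) -> str:
    """Ask the model, run any tool it requests, and feed the result back."""
    messages = [{"role": "user", "content": prompt}]
    for _ in range(max_steps):
        reply = model(messages)
        messages.append({"role": "assistant", "content": reply})
        try:
            call = json.loads(reply)
        except json.JSONDecodeError:
            return reply  # plain text is treated as the final answer
        if not isinstance(call, dict) or call.get("tool") not in TOOLS:
            return reply  # valid JSON, but not a known tool request
        result = TOOLS[call["tool"]](**call.get("args", {}))
        messages.append({"role": "tool", "content": str(result)})
    return "step limit reached"

def scripted_model(messages: list[dict]) -> str:
    """Stand-in for a real model call: requests one tool, then answers."""
    if messages[-1]["role"] == "tool":
        return f"The result is {messages[-1]['content']}."
    return json.dumps({"tool": "add", "args": {"a": 2, "b": 3}})

print(run_agent(scripted_model, "What is 2 + 3?"))
```

The `max_steps` cap is a deliberate design choice: an autonomous loop should always have a bound so that a model that keeps requesting tools cannot run indefinitely.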

Gemma 4 models are also optimized for efficient fine-tuning on a range of hardware, from billions of Android devices to developer workstations. Developers can therefore work with the models on the hardware they already have.

With a 128K-token context window, Gemma 4 can take in long-form content in a single prompt, which suits tasks that require in-depth analysis and comprehension.
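As a rough illustration of what a 128K window means in practice, the sketch below estimates whether a piece of text fits and splits it if it does not. Two assumptions are baked in and should be adjusted for real use: "128K" is taken to mean 131,072 tokens, and token counts are approximated at four characters per token, which is a generic heuristic and not Gemma 4's tokenizer. All function names are hypothetical.

```python
CONTEXT_WINDOW = 128 * 1024  # assumption: "128K" means 131,072 tokens
CHARS_PER_TOKEN = 4          # rough heuristic, not Gemma 4's real tokenizer

def estimate_tokens(text: str) -> int:
    """Approximate the token count from the character count (rounded up)."""
    return -(-len(text) // CHARS_PER_TOKEN)

def fits_in_context(text: str, reserved_for_output: int = 1024) -> bool:
    """Check whether the prompt plus a reserved output budget fits the window."""
    return estimate_tokens(text) + reserved_for_output <= CONTEXT_WINDOW

def split_to_fit(text: str, reserved_for_output: int = 1024) -> list[str]:
    """Cut the text into consecutive chunks that each fit the window."""
    budget_chars = (CONTEXT_WINDOW - reserved_for_output) * CHARS_PER_TOKEN
    return [text[i:i + budget_chars] for i in range(0, len(text), budget_chars)]

document = "word " * 200_000  # 1,000,000 characters of placeholder text
print(fits_in_context(document))
print(len(split_to_fit(document)))
```

For accurate budgeting, the estimate should be replaced with a count from the model's own tokenizer, since characters per token vary by language and content.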

On performance, the models can reach a prefill throughput of 133 tokens per second on devices like the Raspberry Pi 5, which shows they remain usable on lower-end hardware. Prefill throughput is the rate at which a model processes the input prompt before it starts generating output.
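The 133 tokens per second figure can be turned into a back-of-the-envelope wait time before the first output token. The sketch below assumes throughput stays constant regardless of prompt length, which is a simplification, and the prompt sizes are arbitrary examples rather than measured workloads.

```python
PREFILL_TOKENS_PER_SECOND = 133  # figure quoted above for the Raspberry Pi 5

def prefill_seconds(prompt_tokens: int, rate: float = PREFILL_TOKENS_PER_SECOND) -> float:
    """Estimate the time to process a prompt, assuming a constant prefill rate."""
    if prompt_tokens < 0 or rate <= 0:
        raise ValueError("prompt_tokens must be >= 0 and rate must be > 0")
    return prompt_tokens / rate

# Example prompt sizes (illustrative only).
for n in (512, 4096, 131072):
    print(f"{n:>7} tokens -> {prefill_seconds(n):8.1f} s")
```

Under these assumptions, a 4,096-token prompt takes about 31 seconds to prefill, and a prompt that fills a 131,072-token window takes roughly 16 minutes, so long prompts on this class of device are better suited to batch jobs than to interactive use.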

The E2B and E4B models support native audio input for speech recognition, which enables voice commands and spoken interactions.

Developers are already adopting the Gemma 4 models, including the 26B and 31B versions. The prospect of building autonomous agents that can carry out complex tasks is drawing particular interest.

For developers, the release matters because it puts powerful, accessible, and openly licensed models in their hands, which they can use to transform their own projects.